Six months ago a vendor showed you a demo. The AI read a contract, pulled the key terms, flagged the risky clauses, and generated a summary your legal team agreed with. You ran a pilot on fifty documents and the results looked good enough to build a business case around. You signed a contract, kicked off a rollout, and told the board that AI was going to save the company $400,000 a year in review time.

You signed five months ago. The rollout is now "two weeks out." It has been two weeks out since February.

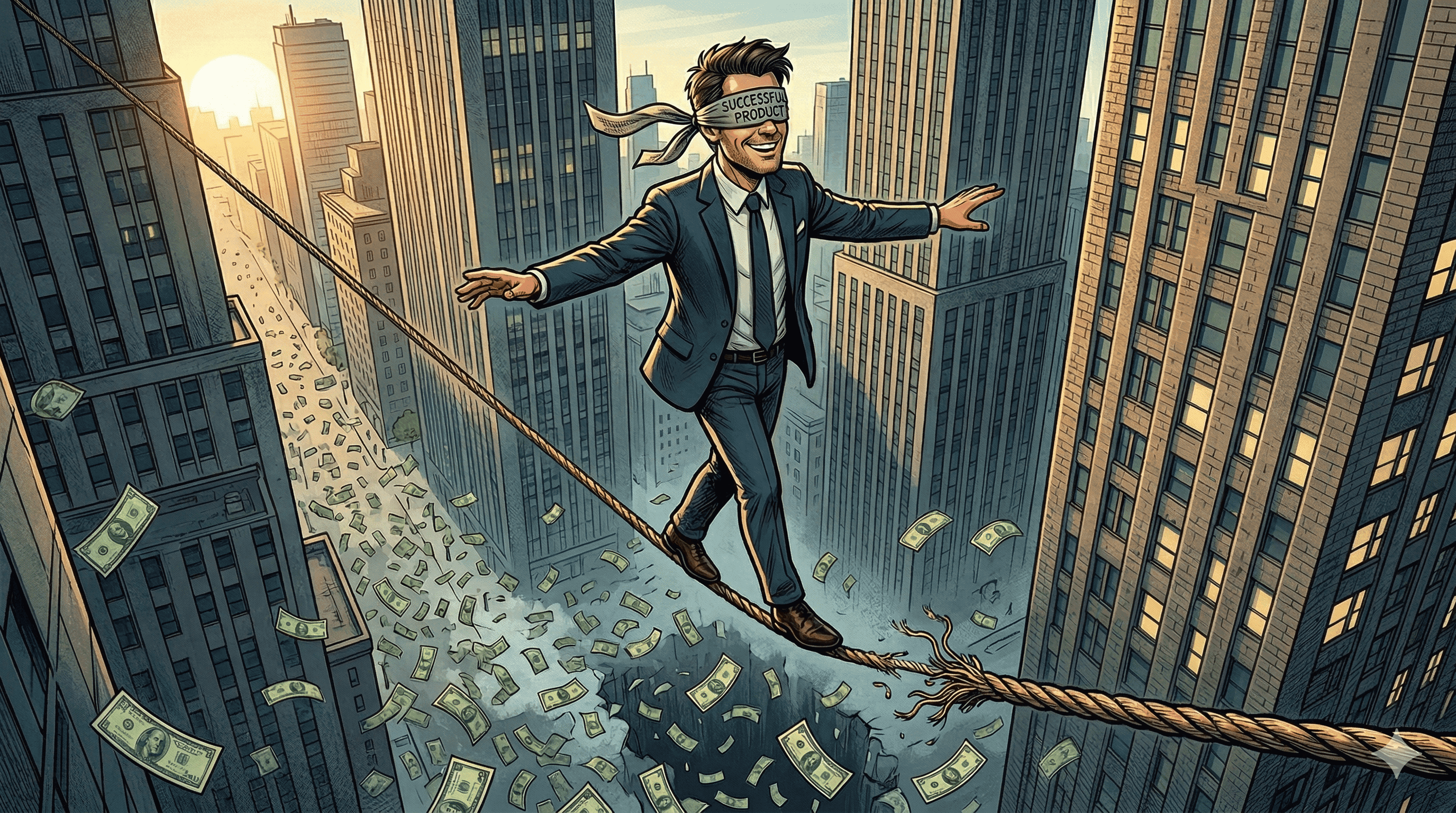

If any part of that sounds familiar, you are not alone, and the model is not the problem. The gap between an AI pilot that works and an AI system that runs your business is enormous, and almost nobody prices it in before they sign.

The Demo Tax

Every AI demo you see, whether from a vendor or from your own team, is optimized for the happy path. The document is clean. The user's question is well-formed. The example data was cherry-picked during setup. Nothing has timed out, nothing has rate-limited, nothing has returned an unexpected format.

That demo is showing you the model. What it is not showing you is the system that model has to live inside once real work starts flowing through it. And that system, not the model, is where AI rollouts fail.

We call this the demo tax: the eighty percent of the work that sits between a working prototype and a production system, and that almost nobody quotes for in the pilot phase.

What's Actually in the Other 80%

When a real AI system goes into production inside a real business, it has to do a lot more than call a model. Here is an incomplete list of what has to exist before you can trust the output.

Data pipelines that feed the model the right thing. The pilot probably ran on a folder of fifty PDFs someone uploaded. Production will need to pull documents out of your document management system, your email, your CRM, or all three at once. Someone has to build that, keep it running, and deal with the files that do not parse.

Error handling and fallbacks. In the demo, the model always returned something sensible. In production, it will occasionally return nothing, return the wrong thing, or time out. Your system needs to know what to do in each case, and the answer is almost never "show an error and stop."
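To make that concrete, here is a minimal sketch of what "know what to do in each case" can look like. Everything in it is an assumption for illustration: `call_model` stands in for whatever client your vendor provides, `REQUIRED_SECTIONS` is a made-up validation rule, and the retry count is arbitrary. The point is only that a timeout, an empty response, and a wrong-looking response each get a defined path, and that path ends at a person rather than an error screen.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical validation rule: a usable contract summary mentions these sections.
REQUIRED_SECTIONS = ("parties", "term", "termination")


@dataclass
class Outcome:
    status: str              # "ok" or "needs_review"
    summary: Optional[str]   # model output when accepted, otherwise None
    reason: str              # why the document ended up where it did


def looks_valid(summary: str) -> bool:
    """Cheap structural check; a real system would use something stricter."""
    lowered = summary.lower()
    return all(section in lowered for section in REQUIRED_SECTIONS)


def summarize_with_fallback(
    document: str,
    call_model: Callable[[str], Optional[str]],
    max_attempts: int = 2,
) -> Outcome:
    """Try the model; on timeout, empty output, or invalid output, route to a human."""
    reason = "no attempts made"
    for _ in range(max_attempts):
        try:
            result = call_model(document)
        except TimeoutError:
            reason = "model timed out"
            continue  # transient failure: try again
        if not result or not result.strip():
            reason = "model returned nothing"
            continue
        if not looks_valid(result):
            reason = "output failed validation"
            break  # in this sketch, a malformed answer goes straight to review
        return Outcome("ok", result, "model output accepted")
    # Nothing usable came back: hand the document to the review queue.
    return Outcome("needs_review", None, reason)
```

The design choice worth noticing is that the function never raises to the caller. Every document leaves with a status, so the work keeps moving even when the model does not cooperate.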

Evaluations you can trust. The pilot was evaluated by someone comparing the output to their gut. Production needs a repeatable way to measure whether the system is actually doing its job across hundreds or thousands of cases, and a way to catch regressions when you swap models or change a prompt.
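A repeatable evaluation does not have to be elaborate to be useful. The sketch below assumes you have a set of documents with answers your team has already agreed on, and that the old and new versions of the system can each be called as a function. The names `accuracy` and `check_regression`, the exact-match scoring, and the two-point tolerance are all illustrative choices, not a standard.

```python
from typing import Callable, Dict, List, Tuple

# One labelled case: (input document, answer the team agreed on).
Case = Tuple[str, str]


def accuracy(predict: Callable[[str], str], cases: List[Case]) -> float:
    """Fraction of labelled cases the system gets exactly right."""
    if not cases:
        raise ValueError("evaluation set is empty")
    correct = sum(1 for doc, expected in cases if predict(doc) == expected)
    return correct / len(cases)


def check_regression(
    baseline: Callable[[str], str],
    candidate: Callable[[str], str],
    cases: List[Case],
    tolerance: float = 0.02,
) -> Dict[str, object]:
    """Compare a candidate (new model or prompt) against the current baseline."""
    base = accuracy(baseline, cases)
    cand = accuracy(candidate, cases)
    return {
        "baseline": base,
        "candidate": cand,
        # Flag the change if accuracy drops by more than the tolerance.
        "regressed": cand < base - tolerance,
    }
```

Run the same check every time a prompt or model changes, and "it feels about the same" turns into a number someone can sign off on.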

Human in the loop where it matters. For anything with real consequences, a human has to be in the decision path. That means a review queue, a UI, a way to override the model, and a way to feed those overrides back in so the system gets better over time.

Monitoring and observability. You need to know, right now, whether the thing is working. Not "the server is up" working, but "the outputs are still accurate" working. That requires dashboards, alerts, and a team that knows what to do when an alert fires.
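One hedged way to watch output quality rather than uptime is to track how often human reviewers override the model. That treats the override rate as a rough proxy for accuracy, which is an assumption of this sketch and not a universal rule. The class name, window size, threshold, and minimum sample count below are all placeholders you would tune to your own volume.

```python
from collections import deque


class OverrideRateMonitor:
    """Tracks how often reviewers overrode the model in the most recent decisions."""

    def __init__(self, window: int = 200, alert_threshold: float = 0.15,
                 min_samples: int = 50) -> None:
        self.results = deque(maxlen=window)   # True means a human overrode the model
        self.alert_threshold = alert_threshold
        self.min_samples = min_samples

    def record(self, overridden: bool) -> None:
        """Log one reviewed decision; the oldest falls out once the window is full."""
        self.results.append(overridden)

    def override_rate(self) -> float:
        """Share of recent decisions that were overridden (0.0 if nothing recorded)."""
        if not self.results:
            return 0.0
        return sum(self.results) / len(self.results)

    def should_alert(self) -> bool:
        """Alert only once there is enough data and the rate is above the threshold."""
        return (len(self.results) >= self.min_samples
                and self.override_rate() > self.alert_threshold)
```

A monitor like this only earns its keep if `should_alert()` is wired to something a person actually sees, and if that person knows what to do next.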

Security and access control. The pilot ran under one person's account. Production needs to enforce who can see what, log every action, and survive an audit. If your data is sensitive, add another layer for compliance.

Integration with the systems the work actually happens in. Nobody wants to log into a new AI tool to do their job. Production means the AI shows up inside the CRM, the ticketing system, or the inbox people already use. That integration is usually the most expensive part of the rollout, and it is almost never in the pilot scope.

That list is not exotic. It is the baseline for any production AI feature, and none of it was in the demo you saw.

Why Vendors Don't Warn You

Most AI vendors are paid on the pilot, not the outcome. Their incentive is to get you excited, get you to sign, and hand you off. The hard work of making the system survive your real data, your real users, and your real compliance requirements lands on your side of the line, and you usually do not discover it until the rollout is months in.

This is not malice. It is a structural mismatch between what a vendor is built to sell and what your business actually needs to run. The vendor sells a model. You need a system.

What a Technical Partner Does Differently

The reason VantaSoft operates as a technical consultancy rather than a dev shop is exactly this kind of gap. A dev shop builds what you ask for. A technical partner looks at the demo, asks what the system around it has to look like in production, and scopes the actual work before anyone commits to a timeline.

When we take on an AI rollout, the pilot is treated as a scoping exercise, not a deliverable. The deliverable is a production system, and we own the decisions that make that system work: how it handles edge cases, how it gets evaluated, how it integrates into the business, and how your team can operate it without us in the room.

If you already have a pilot that worked and a rollout that is stalling, the fix is almost never a better prompt or a bigger model. It is a system design conversation that should have happened before the pilot, and that you can still have now.

Questions to Ask Before You Greenlight the Rollout

If you are about to commit budget to an AI rollout, these questions will surface most of the hidden work before it becomes a problem.

1. What exactly happens when the model returns the wrong answer, and who notices?

2. Where does the data come from in production, and who owns that pipeline when it breaks?

3. How will we measure whether the system is still working six months from now?

4. Which systems does this need to integrate with, and who is building those integrations?

5. What does a human review queue look like, and whose team runs it?

6. What is our plan when the model, the API, or the vendor changes underneath us?

If the answers are vague, the rollout is not ready, and no amount of pilot success will change that.

The good news is that none of this is unknowable. It is just work, and it is work that needs to happen before the contract gets signed, not after. If you would rather have that conversation with a partner whose job is to own the system rather than sell you a model, that is the conversation we have every week with founders and operators who are trying to put AI into production.
